Introduction to LLMs and the OpenAI API in Python

Start using the OpenAI API Python SDK today. Build your first LLM-powered app with runnable code for chat completions, streaming, and token management.

Follow this hands-on LangChain tutorial to master chat models, prompt templates, output parsers, and LCEL chains with runnable Python examples.

Apply prompt engineering fundamentals — zero-shot, few-shot, chain-of-thought, and structured output — to get consistent, reliable results from any LLM.

Learn to build a ReAct agent from scratch in LangGraph with this hands-on guide — wire the think-act-observe loop, add tools, and debug agent...

Build LangGraph agents that call tools with @tool, bind_tools, and ToolNode. Step-by-step examples for web search, database queries, and API calls in Python.

Understand LangGraph nodes, edges, state, and conditional routing through visual diagrams and runnable Python examples you can copy and extend.

Build LangGraph workflows that branch at runtime using conditional edges and routing functions. Route by LLM output, user input, or custom logic with examples.

Discover what LangGraph is, how it compares to LangChain, and when graph-based orchestration is the right choice for building reliable AI agents.

Master LangGraph state management with TypedDict schemas, reducer functions, and add_messages. Learn how nodes share data, merge updates, and track message history.

Complete your LangGraph installation and setup in minutes, then build and run your first StateGraph that calls an LLM and returns a response.

Fine-tune LLMs with Unsloth — 2x faster training, 70% less GPU memory. Step-by-step guide with LoRA, QLoRA, code examples, and deployment tips.

Learn LLM evaluation from scratch: benchmarks, metrics (BLEU, ROUGE, perplexity), LLM-as-judge, and custom pipelines with runnable Python code.

Align LLMs with human preferences using one loss function, with no reward model and no RL. Complete guide with derivation, PyTorch code, and DPO variants.

Learn how LLMs work step by step. Build an inference simulator in Python — tokenize, embed, compute attention, sample, and decode with runnable code...

Build a Python AI chatbot with conversation memory that actually remembers. Raw HTTP tutorial with streaming, 3 hands-on exercises, and complete code you can...

18 min

Graph RAG is an advanced RAG technique that connects text chunks using vector similarity to build knowledge graphs, enabling more comprehensive and contextual answers...

24 min

Adaptive RAG is a dynamic approach that automatically chooses the best retrieval strategy based on your question’s complexity – from no retrieval for simple...

21 min

RAPTOR (Recursive Abstractive Processing for Tree-Organized Retrieval) is an advanced RAG technique that creates hierarchical tree structures from your documents, allowing you to retrieve...

12 min

Fusion RAG is a technique that combines vector and keyword search scores to find more relevant documents for your system. Instead of relying on...

14 min

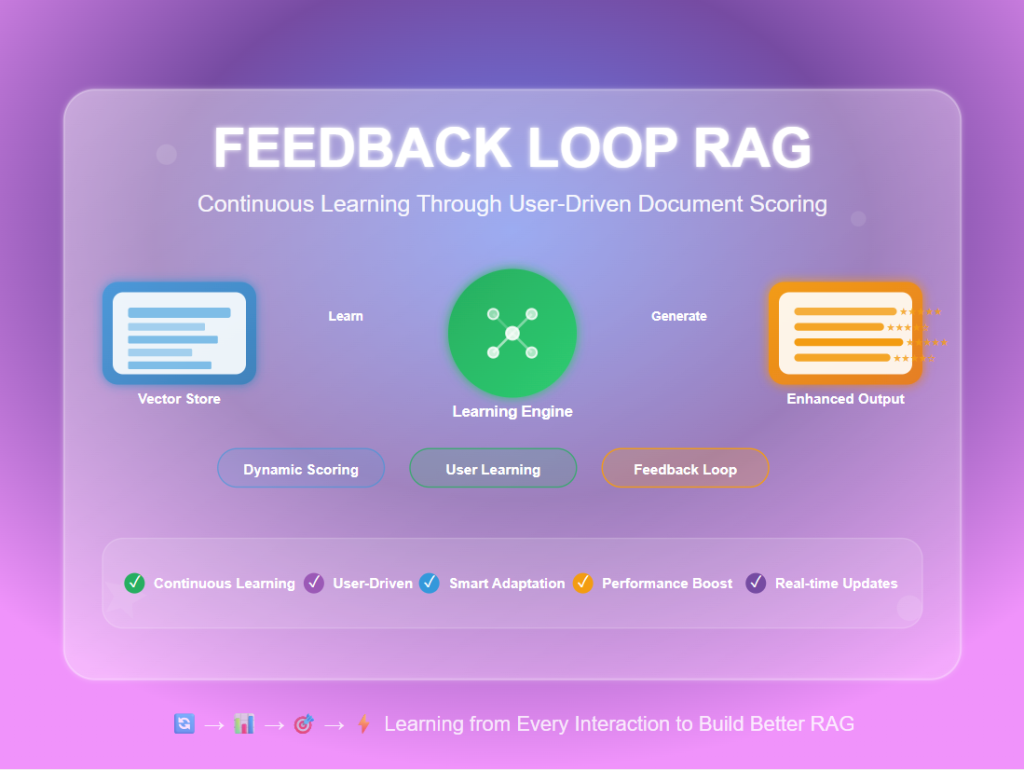

Feedback Loop RAG is an advanced RAG technique that learns from user interactions to continuously improve retrieval quality over time. Unlike traditional RAG systems...
