14 min

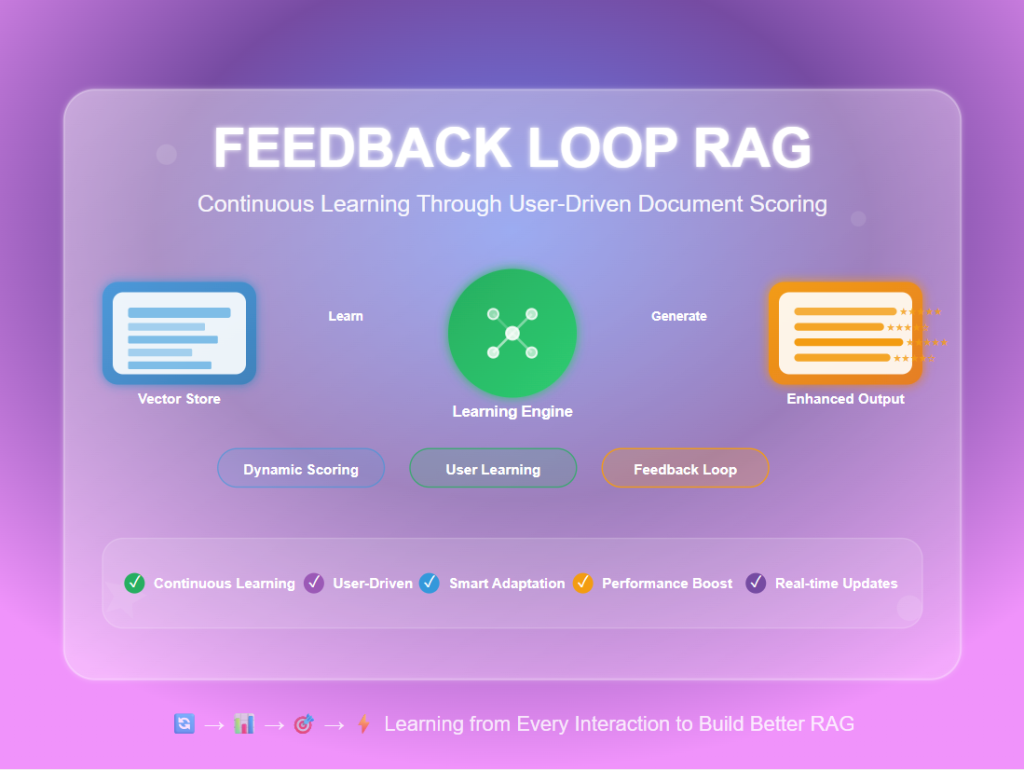

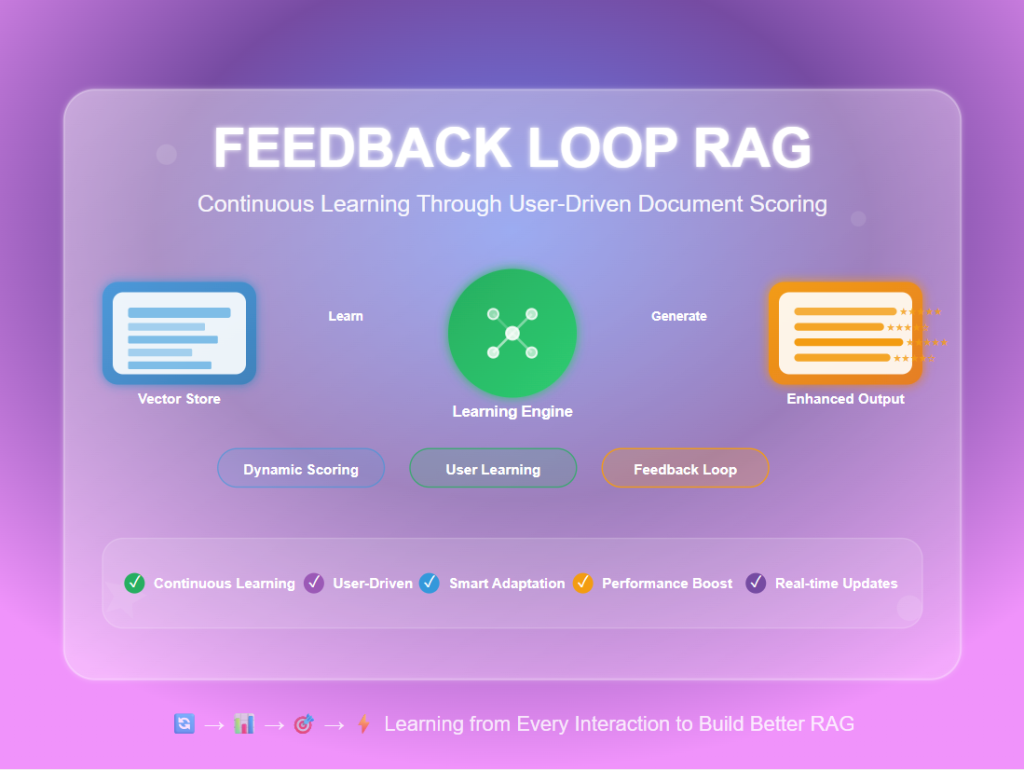

Feedback Loop RAG: Improving Retrieval with User Interactions

Feedback Loop RAG is an advanced RAG technique that learns from user interactions to continuously improve retrieval quality over time. Unlike traditional RAG systems...

23 min

Reliable RAG is an enhanced Retrieval-Augmented Generation approach that adds multiple validation layers to ensure your AI system gives accurate, relevant, and trustworthy answers....

19 min

Multi-Modal RAG is an advanced retrieval system that processes and searches through both text and visual content simultaneously, enabling AI to answer questions using...

18 min

Document Augmentation RAG is an advanced retrieval technique that enhances original documents by automatically generating additional context, summaries, questions, and metadata before indexing them...

22 min

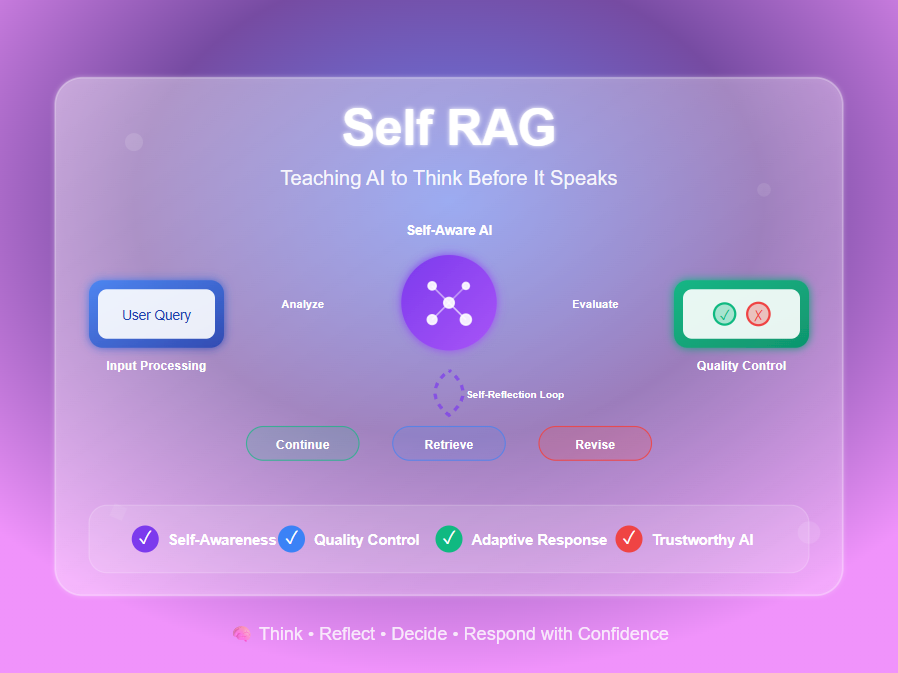

Self RAG (Self-Reflective Retrieval-Augmented Generation) is an advanced AI technique that teaches language models to critique their own performance during the generation process. Instead...

16 min

Corrective RAG (CRAG) is an advanced RAG technique that adds a quality check layer to your retrieval system. Instead of blindly trusting retrieved documents,...

14 min

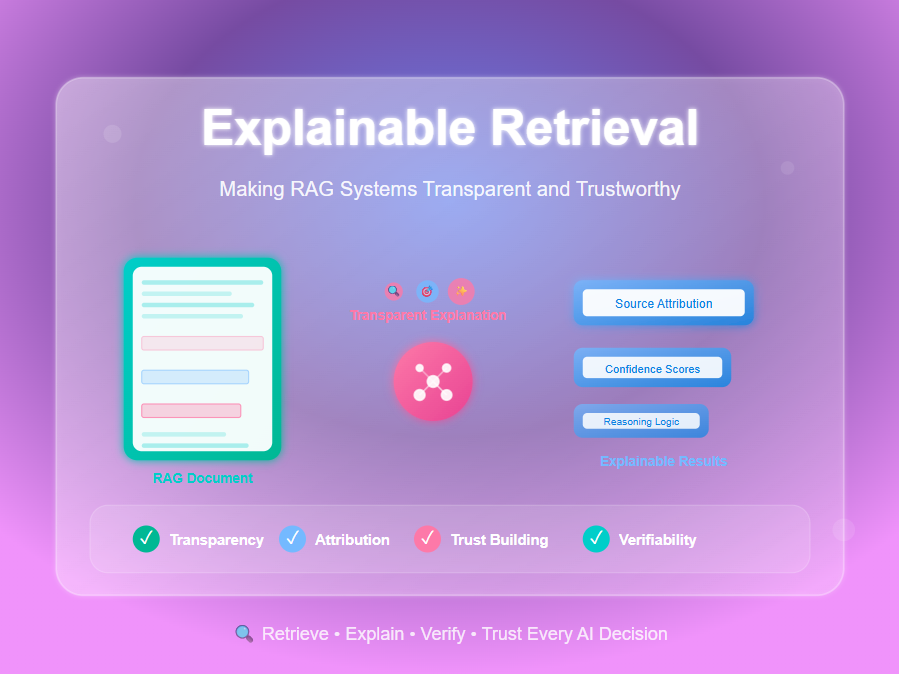

Explainable Retrieval is a technique that makes RAG (Retrieval-Augmented Generation) systems transparent by showing users which documents were retrieved, why they were chosen, and...

22 min

Hybrid Search-RAG combines vector embeddings for semantic understanding with traditional keyword search for exact matches, giving you the best of both worlds when retrieving...

14 min

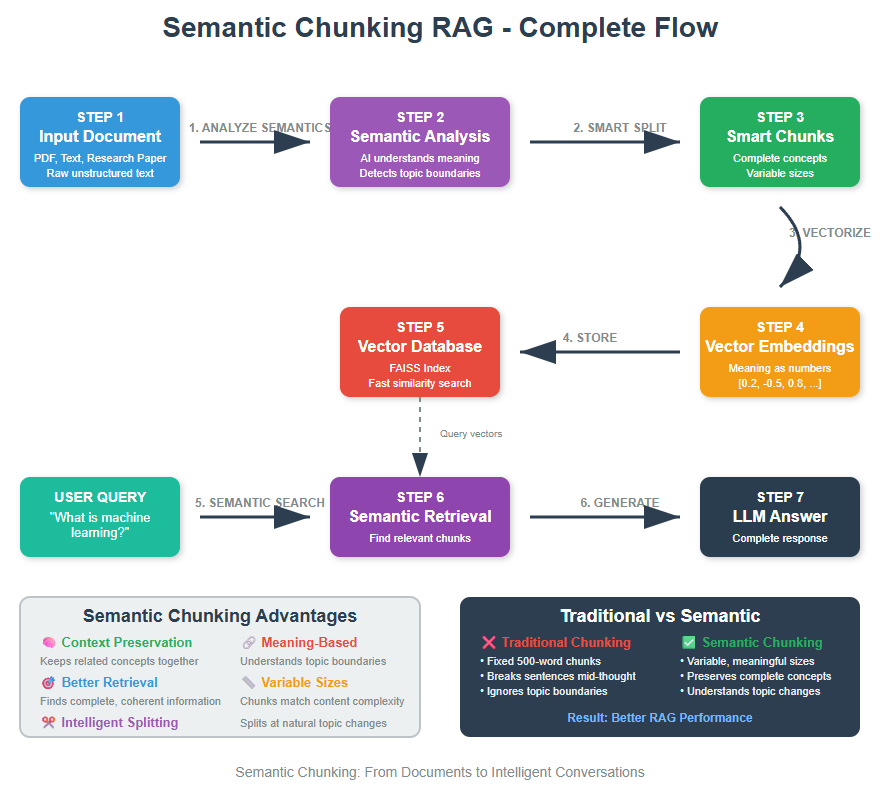

Semantic Chunking is a context-aware text splitting technique that groups sentences by meaning rather than splitting by fixed sizes. This preserves semantic relationships and...

14 min

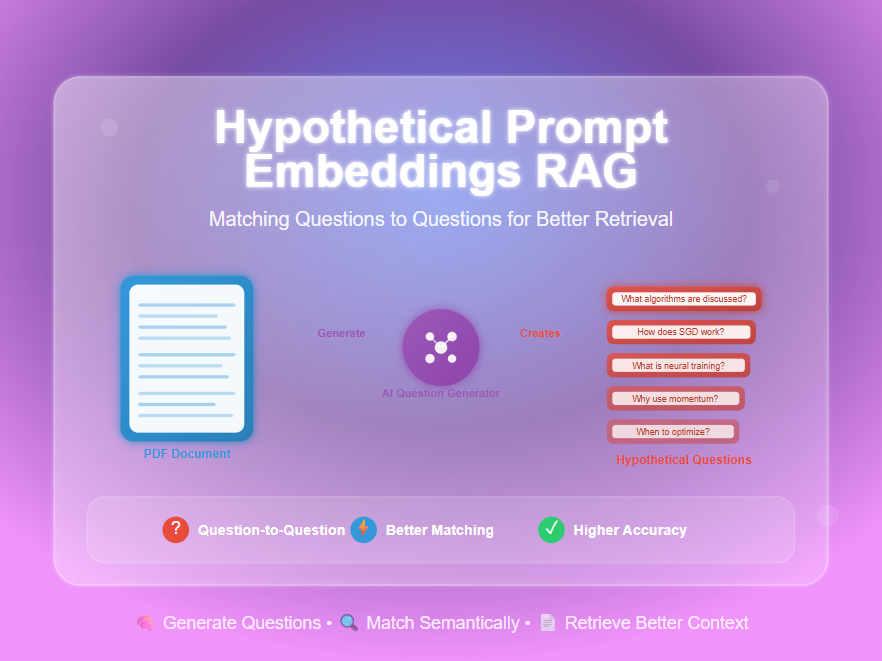

HyPE (Hypothetical Prompt Embeddings) is an advanced RAG enhancement technique that precomputes hypothetical questions for each document chunk during indexing rather than generating content...

14 min

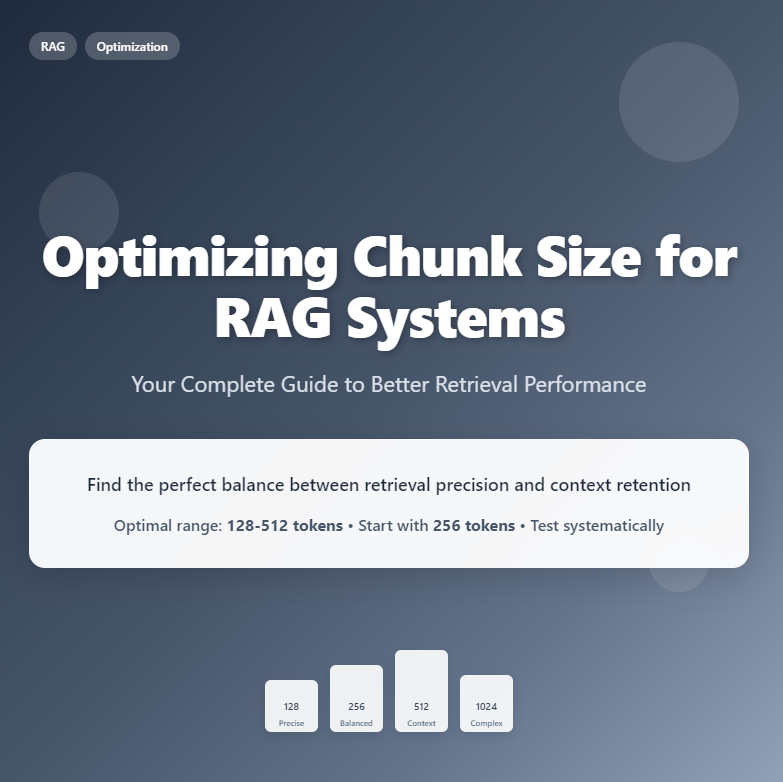

Optimal chunk size for RAG systems typically ranges from 128-512 tokens, with smaller chunks (128-256 tokens) excelling at precise fact-based queries while larger chunks...

28 min

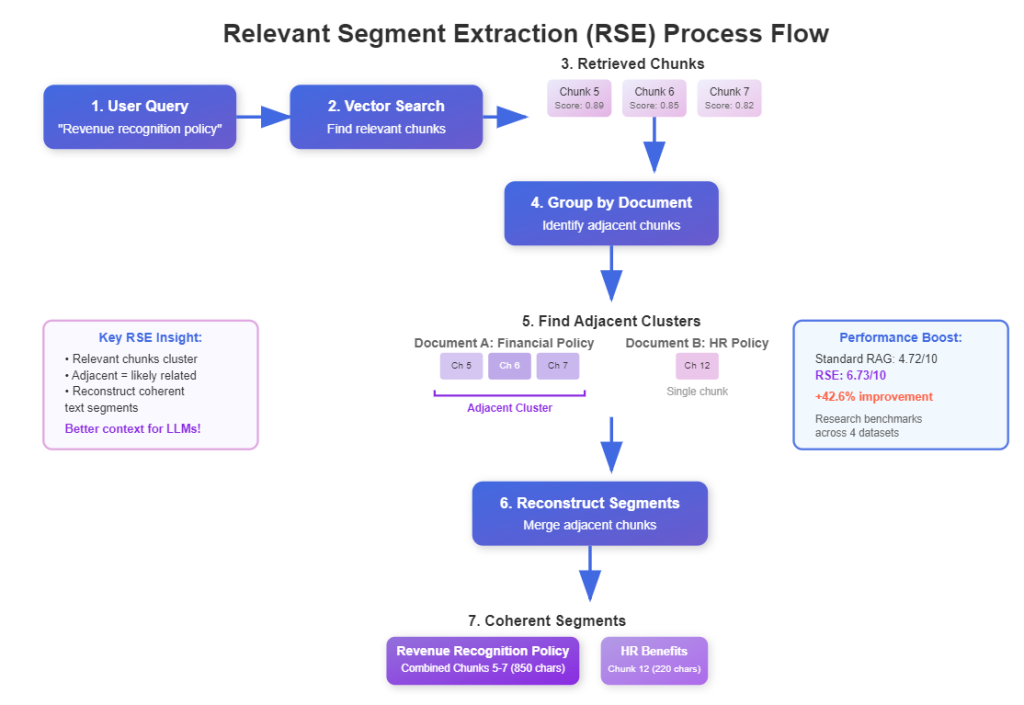

Relevant Segment Extraction (RSE) is a query-time post-processing technique that intelligently combines related text chunks into longer, coherent segments, providing LLMs with better context...

RAG (Retrieval-Augmented Generation) with CSV files transforms your spreadsheet data into an intelligent question-answering system that can understand and respond to natural language queries...

Hypothetical Document Embeddings (HyDE) is an advanced technique in information retrieval (IR) for RAG systems designed to improve search accuracy when little or relevant...

I’m going to walk you through creating a Simple RAG system. But what exactly is RAG? RAG stands for Retrieval-Augmented Generation. Think of it...

Ollama is a tool for running open-weight large language models locally. It’s quick to install, pull LLM models, and start prompting...
